CNI Spring 2026 - Four Bridges

These are personal reflections and opinions, not official positions of my employer or any organization I work with.

I flew out of Boston at 6:15 in the morning, landed in Salt Lake City just before 10am Mountain time, dropped my bags at the Hyatt, and sat down in Regency A for the opening plenary of the CNI Spring 2026 Membership Meeting before I had really registered being somewhere else.

The opening speaker was Rebekah Cummings from the University of Utah (watch the full plenary on YouTube). She set the stage with a frame that felt both hopeful and honest: what libraries stand for, and what libraries are actually good at. Her talk closed with the instruction I carried through the rest of the conference. Get in the room. 90% of the work is just showing up. If an invitation is required, ask for one.

She wasn’t being abstract. At every institution and at every level, AI decisions are getting made in rooms where librarians aren’t showing up. This is a failure of presence. The expertise is already there. Libraries have the values that current AI practice is missing. Consent. Attribution. Privacy. Democratization. Empowerment.

Library workers have the practical skills, too. Information literacy. Evaluating sources. The everyday work of putting values into practice. The arguments already exist. The work is showing up with them.

Cummings also referenced Michael Hanegan and Chris Rosser’s Generative AI and Libraries: Claiming Our Place in the Center of a Shared Future along the way, and their “prophets and priests” framing for AI adoption. Both voices are critical, she argued, for thoughtful implementation.

Doralyn Rossmann is dean at Montana State. She used the London Underground metaphor (mind the gap) to describe what libraries are for in the current moment. Faculty are dealing with urgent, concrete questions. How do I teach with AI now? How do I detect cheating? How do I use this ethically in research? Administrators several levels up often only hear the long-term strategy conversation. The library is close enough to feel the short-term moment, and institutional enough to participate in strategy. That bridging position, she argued, is the actual seat at the table. It doesn’t need justification. It follows from where libraries already sit. Montana State has put money behind the metaphor, too. They’ve stood up a library AI lab staffed with students and existing library faculty.

The afternoon’s first project briefing was on AI in virtual reference. A team from Arizona, Colorado State, Kansas, and Utah State analyzed more than 34,000 chat reference transcripts from several R1 universities. The headline finding, typed into the backchannel with three exclamation points: 38% of chat interactions are complex. Complex enough to require professional judgment.

The most common question types (finding a known journal article, finding relevant sources, interlibrary loan) were also among the most complex, because the conversation that starts with do you have this article? ends ten minutes later on research strategy and access. One of the researchers called chat reference a journey, not a transaction. Vendor narratives that promise to automate chat as a uniform service are selling something the data doesn’t support.

The team shared their Python-and-Gemini transcript-coding pipeline on GitHub, which is worth a look if you think about this kind of work.

UT Austin had spent a charter year methodically evaluating JSTOR’s Seeklight tool for AI-generated metadata. Their field-by-field verdict was honest. Titles were “the most horrifying.” Creator fields often confused the subject of a document with its author. None of the fields hit production quality on their own. But for alt-text, for complex materials like maps and architectural drawings that would otherwise never be described, for the entire category of “collections you never get to,” the tool earns its keep as a first draft that humans then enhance. A colleague from UC Berkeley mentioned in the backchannel that they’ve already deployed Seeklight for their UC Agricultural Extension collection.

Seth Porter’s lightning talk from UC Colorado Springs is the one I carried into the next day. Quantum computing access is exponentially expensive. Cloud platforms like AWS Braket can broker it, but access is usually siloed into individual faculty grants, and most researchers can’t touch it. Porter is building a governance layer. Faculty submit proposals, a committee reviews them, the library allocates compute and tracks cost. The library becomes a neutral broker, lowering the technical floor and spreading capital investment across the institution. The pattern generalizes. It could work for any high-cost, steep-learning-curve category of emerging technology, not just quantum computing.

During the opening plenary, I got stuck on something. The panel was talking about San José State University and its library partnerships in Silicon Valley, and I found myself wondering: why does the library, sitting in the middle of Silicon Valley, still have trouble getting a seat at the table with the AI industry? The incentives feel misaligned at a basic level. Those companies need addictive engagement to sell ads. Libraries exist to empower users to decide for themselves what they read next. How do you bridge that gap?

The thread that followed was more interesting than the question. CNI’s executive director, Kate Zwaard, pointed out that leadership at most AI companies genuinely wants their products to work, and that’s a place where library expertise can get a foot in the door. Someone else observed that venture-backed AI and open-source library infrastructure have become opposing value systems, and being lumped in with the former is itself an obstacle. The panelist himself, Michael Meth, came back later with a fundraiser’s aphorism I haven’t been able to stop thinking about. No one graduates without the library, but nobody graduates from the library. The incentives to recognize the library’s contribution are structurally weak, even when the contribution is real. The harder half of the work is making that contribution legible in terms the other party already values.

I went to sleep on Day One still turning over the same puzzle: where are the incentives actually aligned with library values, and where does the library have to bridge the gap instead?

“Get in the room. If an invitation is required, ask for one.” — Rebekah Cummings, CNI Spring 2026 opening plenary

Four Bridges

Day Two, as it turned out, answered that question four different ways. I walked into four project briefings on AI in academic libraries, and each showed a different pattern of the same bridging move. Pipelines. Strategy. Disclosure. Governance. The same shape, different scaffolding.

Pipelines

The University at Buffalo team spent the back half of 2024 and the first months of 2025 evaluating AI alt-text generation for their digital collections. They did the thing you wish more vendor evaluations did. 45 representative images across 9 categories. Three evaluators. A four-measure rubric (accuracy, relevance, clarity, safety). Three prompt variants per image per tool.

They tested ChatGPT, Gemini, and Claude. They had to drop Gemini early, and later Copilot, because the tools refused to describe people, a refusal mode that blows up a huge portion of any historical photographic collection. ChatGPT beat Claude across most categories. Both struggled most with black-and-white photographs of people, where fine detail carries the semantic load and color cues are absent. The tools hallucinated. They drifted in behavior during the short project timeline.

And yet. For otherwise-undescribed material, for the 1.8 million images of their collections that currently have no descriptions at all, even imperfect AI output that a librarian then revises is a net accessibility gain compared to the status quo, which is nothing.

The presenter’s side project was even more concrete. 18 years of light keeper entries from the South Haven Light Station, 6,351 daily log pages, extracted into searchable text by a pipeline of document segmentation, column detection, and OCR, running on a repurposed flight-sim computer pulled out of a closet. 36,142 columns from newspaper documents in the initial test. The resourcefulness of library infrastructure, visible in a single inference box.

The same argument came at a different scale in the UNC Chapel Hill project briefing. Jason Casden, Amanda Henley, and Rolando Rodriguez described how UNC’s library built a campus AI program without waiting for permanent funding lines or central campus coordination. They stood up a Prompt Lab on LibreChat. Over 2,600 users have touched it since launch.

It runs ChatGPT, Gemini, and Claude through a single interface, with custom agents (a study helper, a research architect), with privacy features built in (conversation deletion, temporary chats), and with a hard constraint on the data it’s currently approved to handle: only their lowest tier of the university’s four-tier classification.

And in a story that deserves more airtime than it got, the library’s usage patterns surfaced a campus-wide Azure content-filtering problem, which triggered a system-wide fix for the researchers who were being blocked. This is the shape of get in the room at institutional scale. The library didn’t negotiate for a seat. It hosted the meeting.

For the 1.8 million undescribed images in UNC’s collections, they built a different pipeline than Buffalo’s. Describe the image. Summarize it down to alt-text length. Score it for risk factors that would flag it for review before it ships. Each step has a narrower scope and an independent quality check. It’s the same lesson as Buffalo’s. Production quality from a one-shot prompt is out of reach. Production usefulness from a chain of scoped steps, with a human in the loop, is not.

What both projects share is a relationship to volume. The institutional interest in AI for libraries is almost always framed as a volume problem. We have 1.8 million images and no time to describe them. We have 34,000 chat transcripts and no capacity to analyze them by hand. But Day Two’s pattern held. Volume is only valuable if human oversight scales with it. The pipeline is how oversight scales.

Strategy

The second bridge is the one I came to the meeting looking for. Maisha Carey and Annie Johnson from the University of Delaware, Ashley Sands and Shawna Taylor from Johns Hopkins, and colleagues from UT Austin walked through three very different runs of the ARL/CNI AI Scenarios method. Four plausible futures organized around two axes of strategic uncertainty, used not to predict but to test strategies against multiple outcomes.

UT Austin ran it as a half-day session with the executive library leadership team only, scoped for shared understanding before broader planning. Johns Hopkins ran the full day-and-a-half with 30+ staff and came out of it with five strategic priorities and a new AI specialist hire brought on April 1st to implement them. Delaware ran a half-day senior-leadership retreat under the worst conditions of the three (no central campus AI coordination, no additional funding, leadership turnover) and still shipped a faculty AI workshop series, central IT collaborations, and the beginnings of an AI Institute partnership.

Two threads from this session kept following me around. The first was Maisha Carey’s reframe of mandatory AI training: include the skeptics in the conversation. Every change either strengthens or undermines organizational trust.

Most AI-adoption programs are built to convert skeptics by force of curriculum, which is a policy for getting compliance rather than learning. The alternative is to build programs around what the ACRL AI Information Literacy Framework calls levels one and two (consideration and knowledge), and to accept informed refusal as a valid outcome. A staff member who has evaluated a tool and decided not to use it is demonstrating competency, not dissent.

That reframe does two things at once. It tells the skeptics they’re being asked to participate in evaluation, not compliance. And it acknowledges that the rejection of a tool, from a position of genuine understanding, is sometimes the right call.

The second thread, which Carey phrased as “inform your refusal” when the speakers turned to what this meant for leaders, named something Day One had also circled. Non-adoption and AI-skepticism are too often treated as deficits to be remediated, when they can also be the informed stance of an expert. The library, of all places, should know how to make room for that stance.

I came out of the session thinking about what a lighter-touch version of Scenarios might look like at less-than-cohort scale. Not the full ARL/CNI cohort workshop. The UT Austin run pointed at the shape. Half a day. A leadership team. An outside facilitator. The ACRL framework for what comes after. Scenarios as a way of informing the refusals and the adoptions both.

Disclosure

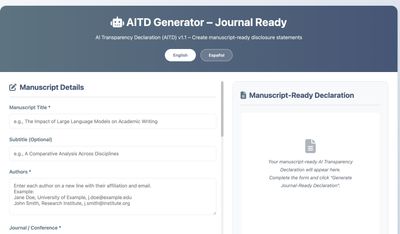

The third bridge arrived as the most intellectually unsettled session of the conference, and the one I’ve thought about most since.

Kari D. Weaver from the Ontario Council of University Libraries presented the AID Framework, a disclosure standard originally developed at Waterloo in 2024 after graduate students had theses rejected despite citing AI use appropriately.

Natalie Meyers, AI Researcher in Residence at CNI and ARL, connected it to adjacent work. She mapped it against IBM Research’s AI Attribution Toolkit, Kaleb Loosbrock’s “nutrition label” approach, and the IAB’s Transparency and Disclosure Framework.

And Sergio Santamarina, joining by video from Argentina because he could not travel to the United States, showed his Spanish-language AI Transparency Declaration. It’s a client-side HTML tool that works entirely offline and has been localized into multiple languages. Sergio offered the best metaphor of the session for what AI actually is: “this technology is a tool, but it’s not a tool like a hammer or a screwdriver. It’s more like a car.”

All three presenters were working toward the same goal. Make AI use legible in the artifacts it produces. Each of them has built something workable.

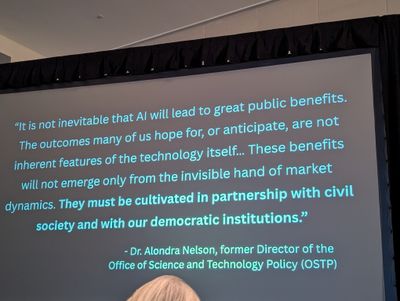

And yet the empirical evidence they closed on, from a 2025 PNAS paper with four experiments and 4,400+ participants, was that disclosing AI use damages professional reputation. Perceived competence drops. Perceived motivation drops. The penalty is real, and it’s paid by the person being honest.

Then the other shoe. A second line of research finds people perceive AI-generated content as more objective than human-generated content, not less. Call it the “AI heuristic” trap. So readers apply heavier skepticism to the disclosed work and lighter skepticism to the undisclosed work. Transparency ends up actively rewarded in the wrong direction.

That finding stayed with me. The framework designers I was listening to are thoughtful people who know the research. None of them were claiming disclosure solves the problem. They were arguing for the structures that have to sit around disclosure. Quality signals paired with disclosure (“AI-drafted, human-verified”). Social norms that make AI use expected rather than remarked on. Legal and regulatory floors that remove individual disclosure as a reputational liability. The tools were proposed as floors, not solutions.

I posted a version of this question into the backchannel, in the specific direction of opt-out consent. If people who care enough to opt out of being included in training data are systematically different from people who don’t (more technical, more privacy-aware, more institutional), then “consent” at scale is a sampling-bias generator. The institutions that care most about AI ethics may be the ones most underrepresented in the training data that shapes how AI behaves around their concerns. Transparency and consent are each trying to carry a load they aren’t structured to carry. Libraries have been working on consent and attribution for a century. They have something to offer in how these mechanisms actually function.

Which loops back to the Silicon Valley question from Day One. The incentive misalignment is real, and the Day Two disclosure session gave it a specific mechanism. The AI layer’s output is trusted above human judgment by default. The transparency mechanisms designed to correct for that don’t, because they’re structurally asymmetric. This is a problem libraries are positioned to understand. It’s also, uncomfortably, a problem no single library is positioned to solve.

Governance

The fourth bridge was never a dedicated session. It sat underneath all the other sessions. Seth Porter’s lightning talk on Day One had named it explicitly: the library as neutral broker for high-cost, steep-learning-curve emerging technology. Faculty submit proposals, a committee reviews, the library allocates compute, the library tracks cost. The governance layer is what makes expensive infrastructure accessible at scale, and the library is structurally positioned to hold it because the library’s incentives actually align with broad access. Most other units on campus have reasons to hoard.

UNC’s Prompt Lab is the same pattern at a larger scale and a cheaper underlying technology. Faculty, staff, and students get a shared platform. Custom agents live at the institutional layer. Usage data surfaces institutional problems to be fixed. Cost gets spread across the campus rather than trapped in individual budgets. And critically, the library doesn’t compete with central IT. It rides central IT’s infrastructure, builds the tools and instruction layer on top, and distributes expertise back out. That’s a governance pattern, not a product.

Put the four bridges side by side and they compose. Pipelines make AI outputs usable. Strategy keeps institutional direction testable under multiple futures. Disclosure makes use legible. Governance makes access equitable. Each one is a pattern libraries are already, structurally, good at.

What I brought back to Baker Library at Harvard Business School isn’t whether libraries have a role in campus AI work (Cummings settled that on Day One). It’s which of the four bridges each library should be building, given its institutional context.

Data governance is the conversation I find myself most drawn into. The library’s structural position carries weight there for all four reasons at once. Close to the licensing terms AI touches. Holding the metadata and descriptive infrastructure AI has to use. Close enough to faculty to feel the short-term pressure. Structural enough to be in the room for the long-term conversation.

The disclosure paradox is not hypothetical for any research institution whose faculty are already using these tools in teaching and writing. The pipeline lesson applies at library scale just as cleanly as it applies at campus scale. The governance pattern scales down to a single library the same way it scales up to a whole campus.

I came home with a list of small experiments worth running and a slightly sharper sense of what showing up actually means. The work is showing up with what libraries already have, and making it usable by the other people in the room.

A non-AI aside, because a conference is also a place. The CNI team makes this meeting welcoming and keeps the conversations going. The break food is good. The reception has a real assortment. The Slack backchannels are thoughtfully moderated.

And someone at the conference made me try my first Utah dirty soda. For the uninitiated: a soda base, a row of flavored syrups, and whatever mix-ins you can justify.

The closing plenary was delivered by Manish Parashar, the University of Utah’s inaugural Chief AI Officer and Executive Director of the SCI Institute (watch the full plenary on YouTube). I wasn’t in the room, but one line from the session showed up in the backchannel and has been rattling around in my head since. Only about 1% of datasets in DataCite have at least one citation. If the library’s job is to make research findable and creditable, that number is a measurement of the gap the next decade of infrastructure work has to close. It’s also a reminder that the disclosure and attribution work libraries are debating for AI has a much older sibling in the data-citation work libraries have been slowly winning for years.

The disclosure session left me with a narrower, sharper version of the Day One puzzle. What does post-disclosure information literacy look like? If transparency is structurally insufficient as a warning mechanism, if it gets gamed and penalized and sometimes inverted by the AI-heuristic trap, then the library’s classical information literacy work has a new job description. Teaching readers to evaluate claims when disclosure is missing or performative. That’s a curriculum we don’t yet have, and it’s the kind of thing libraries, plural, tend to figure out together. Which is also why I spent a couple days in Salt Lake City.

Get in the room. If an invitation is required, ask for one.

If any of these threads pulled, the references are folded below.

Resources from CNI Spring 2026

Conference

Disclosure tools and frameworks

- AID Framework (statement builder) — Kari D. Weaver, OCUL/Waterloo

- Weaver, The AID Framework — C&RL News

- IBM AI Attribution Toolkit

- Kaleb Loosbrock — AI Disclosure Drafting Tool

- IAB Transparency and Disclosure Framework

- Sergio Santamarina — AI Transparency Declaration (Codeberg repo)

Strategy and instruction frameworks

- ARL/CNI AI Scenarios — the four-future method run at UT Austin, JHU, and Delaware

- ACRL AI Information Literacy Framework

Projects and platforms in the wild

- LibreChat — open-source multi-model chat platform UNC’s Prompt Lab runs on

- UNC Library Generative AI Research — Casden, Henley, Rodriguez

- Virtual reference transcript-coding pipeline — Arizona, Colorado State, Kansas, Utah State team

- JSTOR Seeklight — AI metadata generation tool, the UT Austin charter year evaluated

- UC Berkeley Ag Extension collection — early Seeklight deployment

- Montana State Library AI Lab

- AWS Braket — quantum computing platform behind Seth Porter’s library-as-broker model

Research and reading

- Rasooli et al., Evidence of a social evaluation penalty for using AI — PNAS, 2025

- Hanegan & Rosser, Generative AI and Libraries: Claiming Our Place in the Center of a Shared Future — ALA Editions

- DataCite — referenced in Manish Parashar’s closing plenary on dataset citation